Turn Your Camera into an AI Brain: RDK X5 Object Detection with YOLO

Last Updated on December 18, 2025 by Engr. Shahzada Fahad


D-Robotics RDK X5:

Let me start with a simple question.

What if your camera could decide when to turn something on?

Previously, we controlled an LED through a graphical user interface.

But today, the LED will react automatically… based on what the camera sees.

So, in this tutorial, you will learn how to implement RDK X5 object detection using a camera and pretrained deep learning models, including YOLO.

We will build a real-time AI system that detects people and objects and automatically controls hardware devices like LEDs, relays, or buzzers.

This guide covers camera setup, SSH & VNC access, real-time object detection, and GPIO automation on the D-Robotics RDK X5.

By the end of this article, you will know how to detect any object from the entire coco.names list and use those detections to control real-world devices. I am using an LED on pin 37 as an example.

But the real magic happens when we bring in YOLO.

We are creating a smart two-zone system: Normal and Prohibited.

And the moment someone enters the prohibited zone… the LED instantly lights up.

And of course, you can replace the LED with anything; a buzzer, a relay, or even a camera trigger. Once your system can detect a person and switch a pin… your automation projects suddenly become unlimited.


Before we get started, I want to give a huge shout-out to Meshnology for sending over the RDK X5 Developer Kit. Their support makes articles like this possible!

If you haven’t checked them out yet, seriously; take a minute and visit their website at meshnology.com. They have an amazing lineup of embedded systems, development boards, IoT solutions, and so many cool products that makers, engineers, and hobbyists will love. Highly recommended!

Right now, I am on the desktop; just like in the previous article.

I can control everything directly on the RDK X5 using a keyboard and mouse…

but today, let’s make things a little more interesting.

Instead of sitting in front of the board, why not access it directly from a laptop?

This single change gives us three massive advantages:

Number one:

You can install your system in a safe, permanent location and access it remotely whenever you want.

You can run different scripts, make changes, and test new features, all without physically touching the device.

Whether you are in another room, in your studio, or relaxing on your bed, you can control the entire RDK X5 setup with complete freedom.

Number two:

If you are a content creator like me, this workflow is a game-changer.

Every command you run, every tweak you make; you can document it instantly on your laptop.

You can download any file or folder from the RDK X5 directly, keep everything organized, and work much faster without breaking your creative flow.

Number three:

Screen recording becomes so much easier.

Instead of pointing a camera at a display or capturing low-quality footage, you can record your laptop screen directly.

So today, not only are we upgrading our LED control using image processing and smart detection zones…

We are also upgrading the way we interact with the RDK X5 itself.

Enable SSH and VNC for RDK X5 Object Detection Development

So, let’s begin by doing the most important thing—enabling SSH and VNC.

Because before we can control the RDK X5 from our laptop, we need a secure and reliable way to access it remotely.

Let’s set that up first.

Simply right-click anywhere on the desktop and select “Open Terminal Here.”

Now type this command:

```
sudo srpi-config
```

Go to Interface Options, and here you have three choices.

You can enable only SSH, which gives you remote command-line access;

you can enable only VNC, which gives you a full graphical desktop remotely;

or, my personal recommendation, you can enable both.

I have both enabled for a reason.

When you are using VNC, you can control the desktop just like you are sitting in front of the RDK X5…

but you can’t directly download files or folders from the device to your laptop.

Maybe there’s some hidden workaround; I am not sure; but I haven’t found one that works smoothly.

And that’s exactly where SSH becomes incredibly powerful.

Through SSH, you can transfer any file or folder from the RDK X5 to your laptop in just a few seconds.

For now, go ahead and enable both options.

I already have SSH enabled, and you will enable VNC the exact same way.

And with that… we are done with the RDK X5 side of the setup.

Now, on the laptop side:

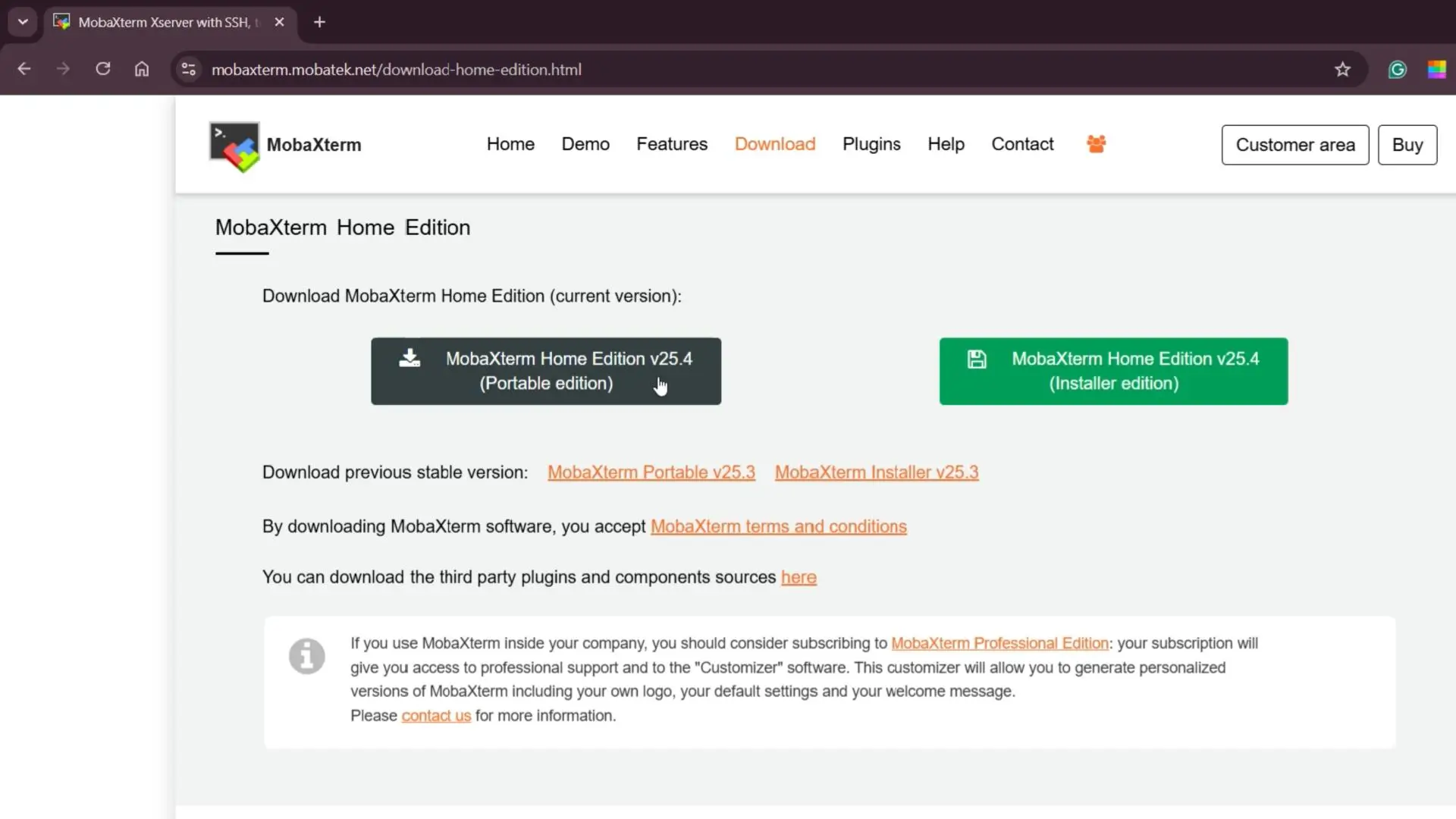


Head over to the official MobaXterm website and download the Portable Edition of the software.

It’s lightweight, it runs without installation, and it gives you everything you need; SSH, VNC, SFTP, and more; all in one place.

After you open the MobaXterm software, click on Session.

Now let’s go ahead and click on VNC inside MobaXterm.

Before connecting, make sure both your laptop and the RDK X5 are on the same WiFi network or connected to the same router.

This is important; otherwise, they simply won’t see each other.

Next, you will need the IP address of your RDK X5.

If you don’t already know it, don’t worry; there’s an easy way to find it.

Just open the Command Prompt on your laptop and type:

```
arp -a
```

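This command lists the devices your laptop has recently talked to on the local network, along with their IP and MAC addresses.

Look through the list, find the one that matches your RDK X5, and that’s the address you need.

If you can’t tell which entry is the board, run hostname -I in a terminal on the RDK X5 itself; it prints the board’s own IP address.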
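If the list is long, a few lines of Python can pull out just the IP and MAC pairs. This is only a sketch: the sample text below is made up and follows the Windows layout of arp -a, and the names parse_arp_table, ARP_ROW, and SAMPLE are my own, not part of any tool.

```python
import re

# One IPv4 address followed by a MAC address (separators '-' or ':').
ARP_ROW = re.compile(
    r"(?P<ip>\d{1,3}(?:\.\d{1,3}){3})\s+"
    r"(?P<mac>(?:[0-9A-Fa-f]{2}[-:]){5}[0-9A-Fa-f]{2})"
)


def parse_arp_table(text):
    """Return a list of (ip, mac) pairs found in the text of `arp -a`."""
    entries = []
    for line in text.splitlines():
        match = ARP_ROW.search(line)
        if match:
            # Normalise the MAC to lower case with ':' separators.
            mac = match.group("mac").lower().replace("-", ":")
            entries.append((match.group("ip"), mac))
    return entries


# Made-up sample output; your addresses will be different.
SAMPLE = """
Interface: 192.168.1.20 --- 0x8
  Internet Address      Physical Address      Type
  192.168.1.1           aa-bb-cc-00-11-22     dynamic
  192.168.1.57          aa-bb-cc-33-44-55     dynamic
  192.168.1.255         ff-ff-ff-ff-ff-ff     static
"""

for ip, mac in parse_arp_table(SAMPLE):
    print(ip, mac)
```

On a real laptop you would paste the actual arp -a output in place of SAMPLE. Unplugging the board, running the command again, and comparing the two lists is a simple way to spot which entry belongs to the RDK X5.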

Simply enter that IP address into the VNC window and press Enter.

And just like that; within a second; you will be connected to the RDK X5’s desktop.

No complicated networking, no technical jargon… it just works.

I already have the RDK Stereo Camera Module connected to the RDK X5 board.

What this stereo camera actually is, its complete specifications, and how to connect it properly to the RDK X5; I have already covered all of that in my Getting Started article on the RDK X5.

I have also connected the LED to pin 37, and if you want to learn how to control GPIO pins on the RDK X5, I explained everything in detail in my second article.

With that said… our entire setup is now ready to go.

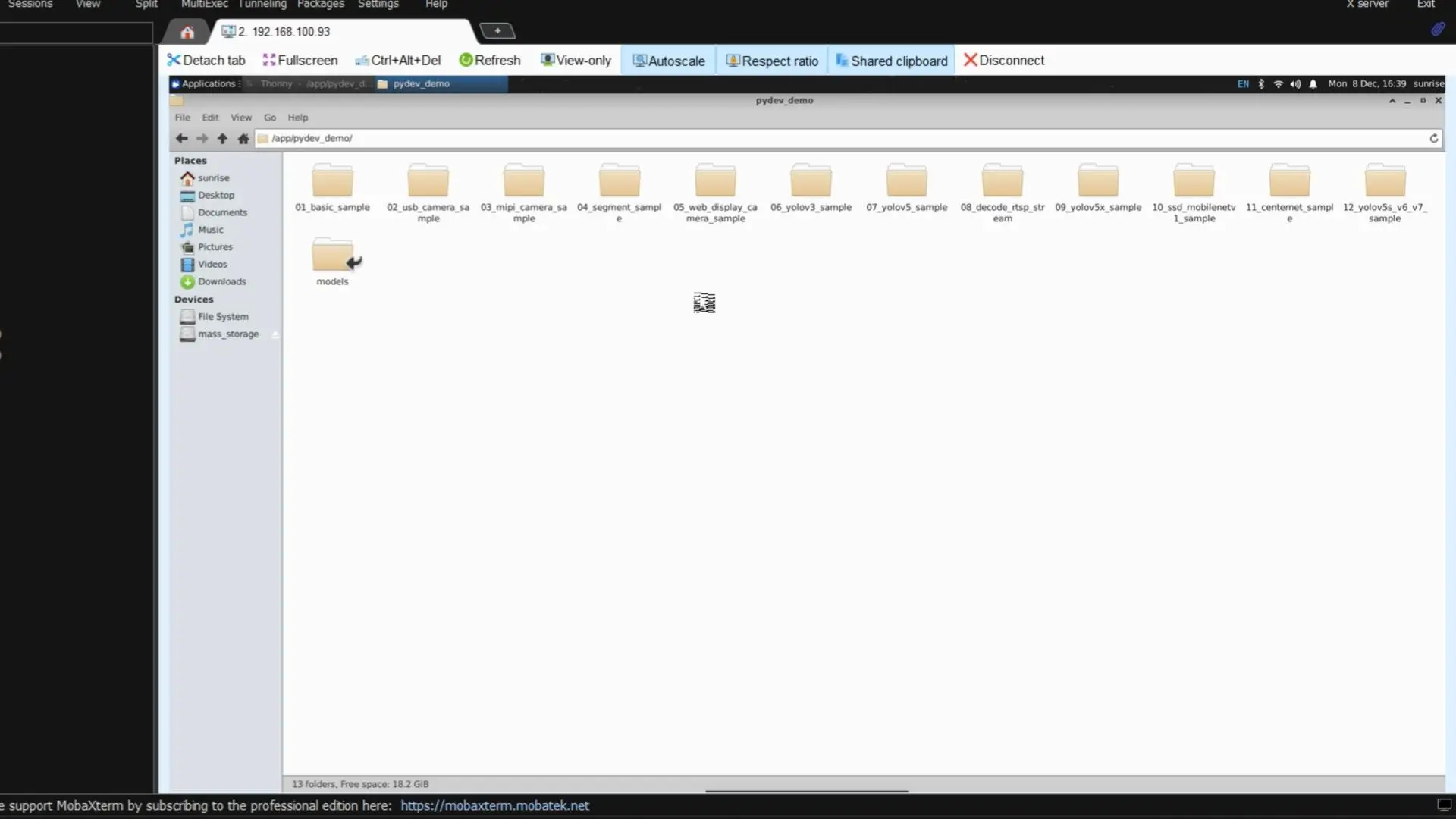

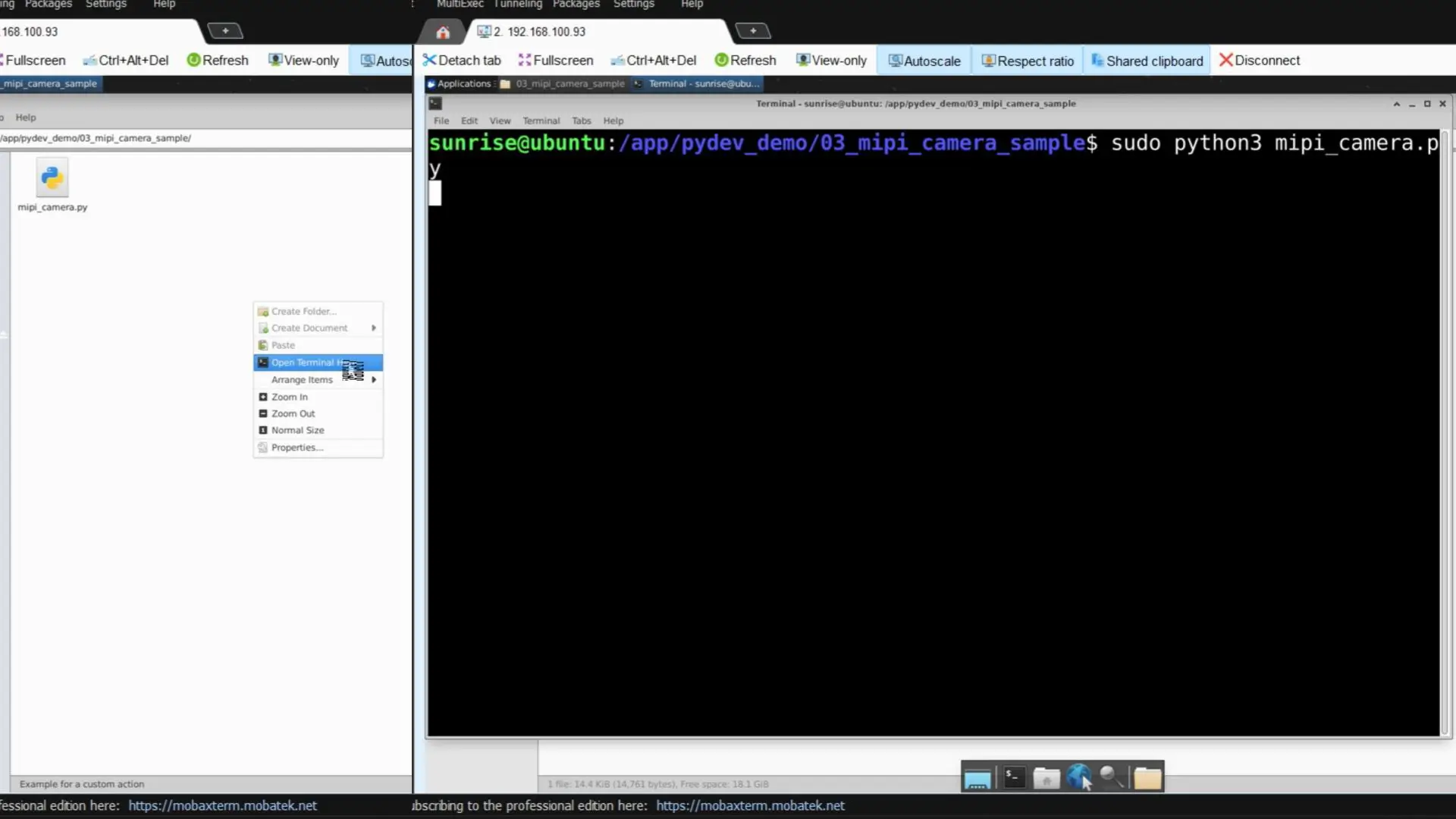

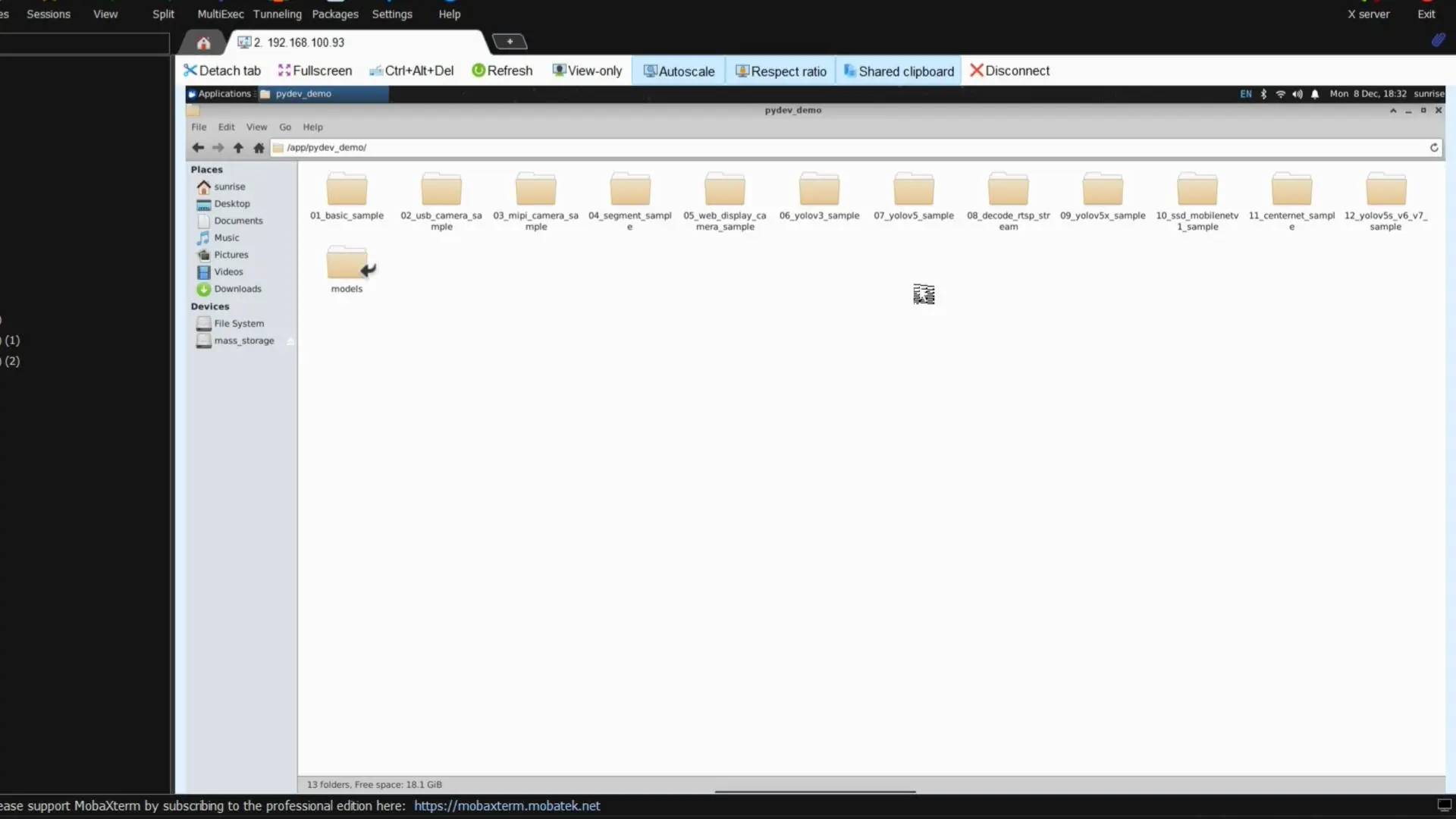

While you are still on the desktop, go ahead and open the File System.

From there, navigate to the app folder… and then open the pydev_demo directory.

Inside this folder, you will notice there are a lot of example projects; everything from simple tests to real-time video streaming demos. For our work, we are interested in the third folder, the one designed specifically for real-time streaming. So let’s open that.

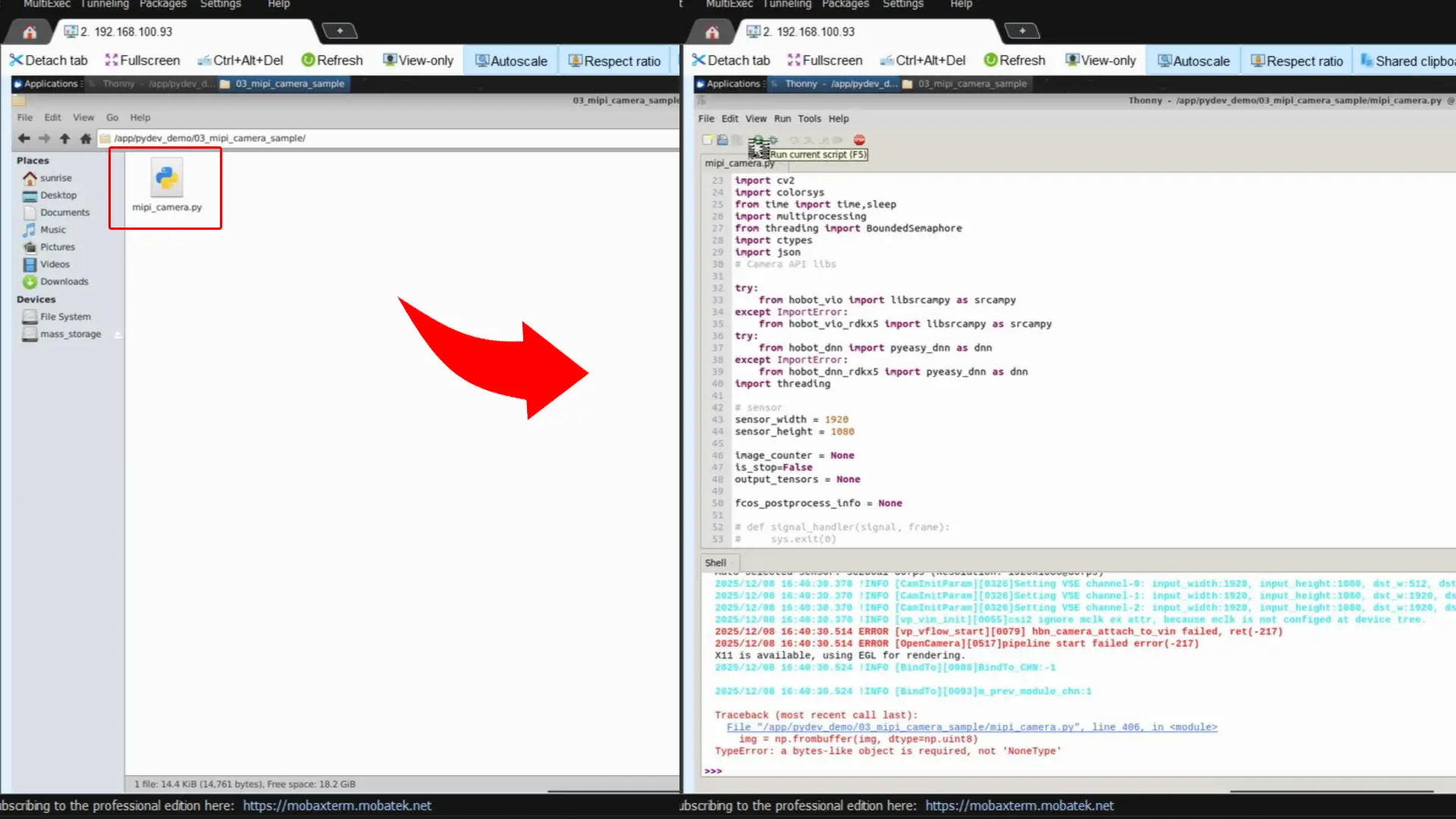

Now, here’s the interesting part.

If you try to open this Python file and run it directly from the interface, it won’t work.

As you can see in the image above, there’s no live video feed, no camera output, nothing happening.

That’s because this example must be executed through the terminal.

Right-click anywhere inside the folder and select “Open Terminal Here.”

This will open a terminal window already pointed to the correct directory, so we don’t need to type any long paths.

Now, to run the mipi_camera.py file, simply enter this command:

```
sudo python3 mipi_camera.py
```

Hit Enter…

and now you will get proper, real-time video streaming from the RDK camera module—exactly the way this example is intended to run.

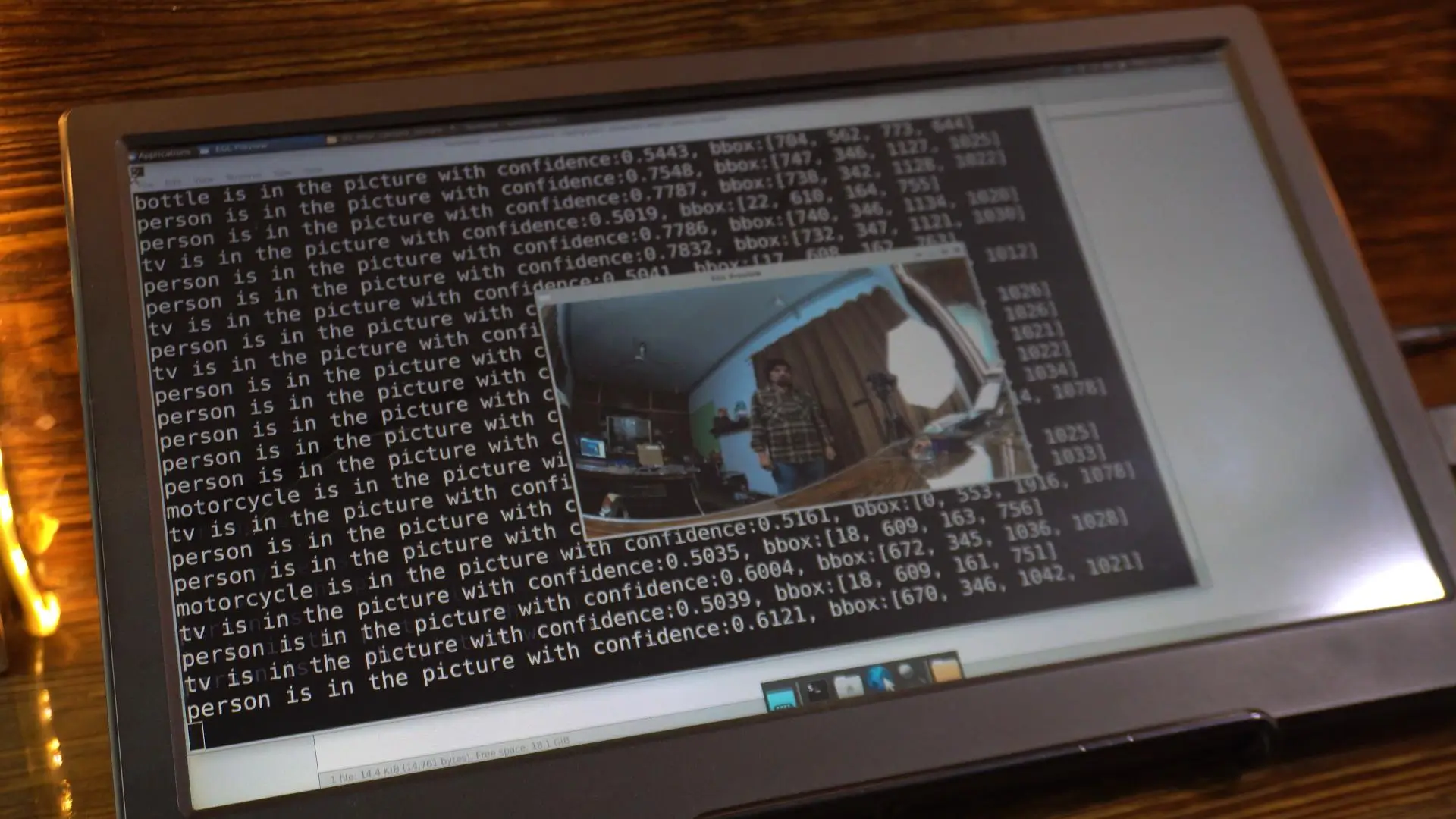

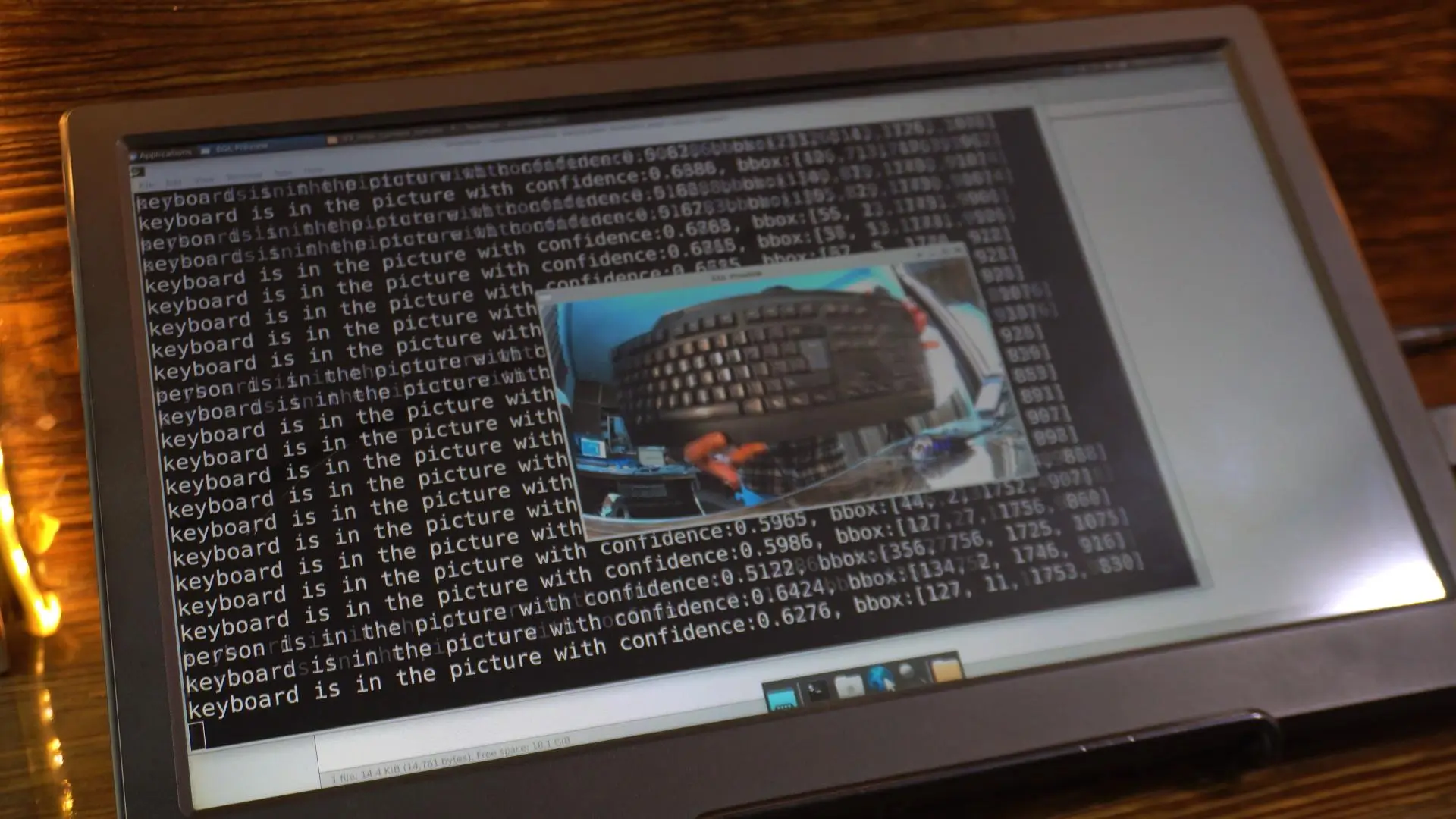

This code is capable of detecting all the objects listed inside the coco.names file. For example, since I am currently in front of the camera, it’s printing “person is in the picture.” And now if I hold a keyboard in front of it, the detection changes and it starts printing “keyboard.”

And you can try this with anything; place different objects in front of the camera and you will see the detections update in real time.

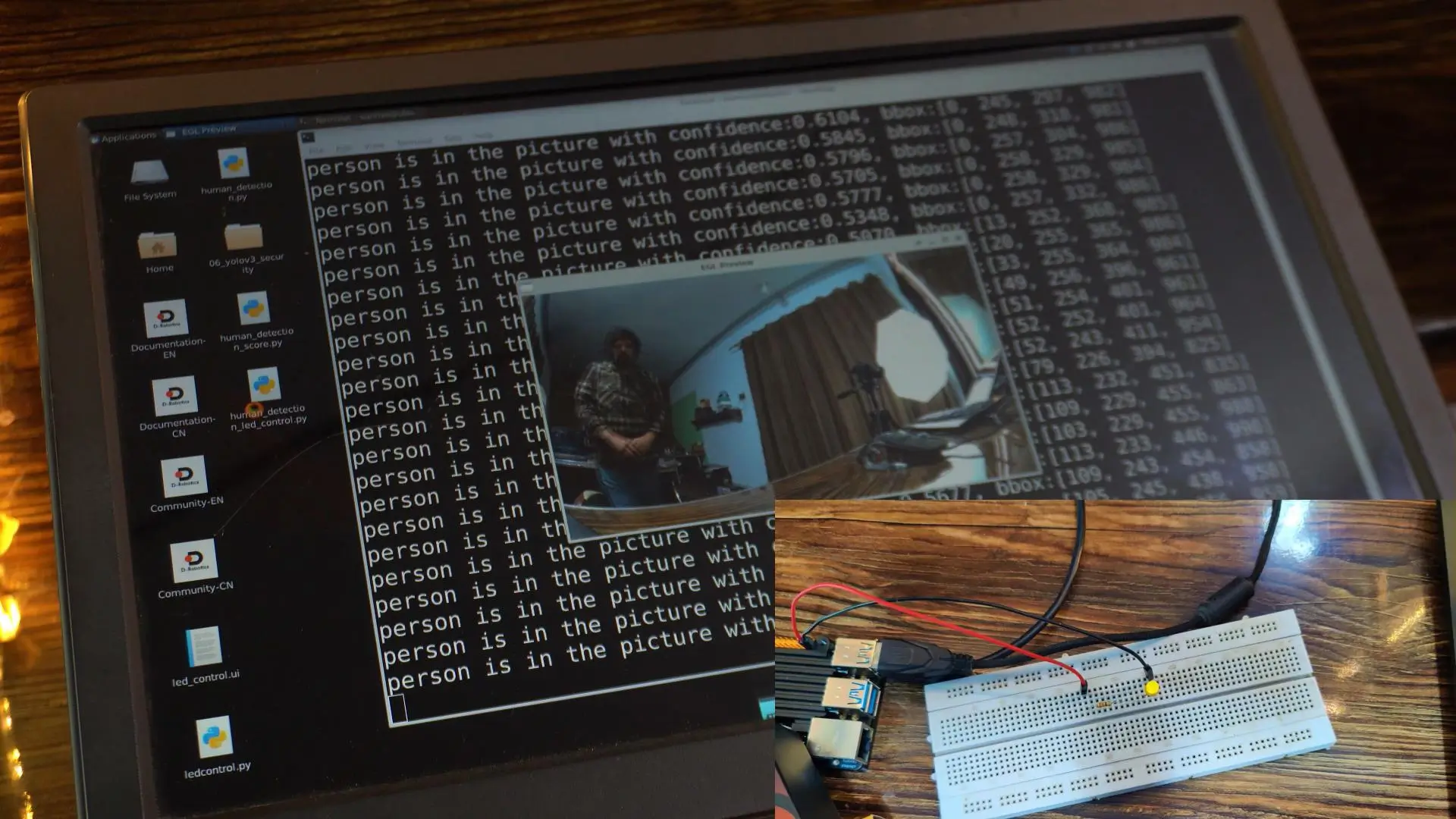

But my goal here is a little different.

I want the LED to turn ON only when a specific object appears.

For example, I want the LED to turn on only when the camera detects a person.

If it detects anything else (keyboard, cup, bottle, whatever), the LED should remain OFF.

So I have modified the original code and made it much more user-friendly.

Now, all you have to do is set TARGET_OBJECT at the top of the script to the name of the object you want to trigger the LED. That’s it.

Right now, I have set it to “person”, which means the LED will turn ON only when a human is detected.
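One thing to watch: the script compares names with a plain ==, so a typo or a capital letter in TARGET_OBJECT means the LED simply never turns on, with no error. A small check like the one below can catch that before the camera starts. It is a sketch only; check_target and KNOWN_CLASSES are hypothetical names, and the list is a short excerpt of the 80 names returned by get_classes() in the script.

```python
import difflib

# A few names copied from get_classes(); the real script lists all 80.
KNOWN_CLASSES = ["person", "bicycle", "car", "cup", "bottle", "keyboard", "cell phone"]


def check_target(name, classes=KNOWN_CLASSES):
    """Return the cleaned-up target name, or raise ValueError with suggestions."""
    cleaned = name.strip().lower()
    if cleaned in classes:
        return cleaned
    # Offer up to three similar-looking class names as hints.
    hints = difflib.get_close_matches(cleaned, classes, n=3)
    raise ValueError(f"Unknown class {name!r}. Close matches: {hints}")


print(check_target(" Person "))
```

Calling check_target("keybord") raises a ValueError that suggests "keyboard", which is much easier to debug than an LED that silently stays off.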

Object Detection and LED Control:

```python
import sys
import signal
import os
import numpy as np
import cv2
import colorsys
from time import time, sleep
import multiprocessing
from threading import BoundedSemaphore
import ctypes
import json
import threading

# --- GPIO IMPORT ---
import Hobot.GPIO as GPIO

# --- USER CONFIGURATION ---
TARGET_OBJECT = "person"
LED_PIN = 37  # Physical Pin 37
# --------------------------

# --- MODEL PATH CONFIGURATION ---
# FCOS detector with a 512x512 NV12 input (see the file name)
MODEL_PATH = '/app/pydev_demo/models/fcos_512x512_nv12.bin'
# --------------------------------

# Camera API libs
try:
    from hobot_vio import libsrcampy as srcampy
except ImportError:
    from hobot_vio_rdkx5 import libsrcampy as srcampy

try:
    from hobot_dnn import pyeasy_dnn as dnn
except ImportError:
    from hobot_dnn_rdkx5 import pyeasy_dnn as dnn

# sensor
sensor_width = 1920
sensor_height = 1080

image_counter = None
is_stop = False
output_tensors = None
fcos_postprocess_info = None


# ctypes mirrors of the structures expected by libpostprocess.so
class hbSysMem_t(ctypes.Structure):
    _fields_ = [
        ("phyAddr", ctypes.c_double),
        ("virAddr", ctypes.c_void_p),
        ("memSize", ctypes.c_int)
    ]


class hbDNNQuantiShift_yt(ctypes.Structure):
    _fields_ = [
        ("shiftLen", ctypes.c_int),
        ("shiftData", ctypes.c_char_p)
    ]


class hbDNNQuantiScale_t(ctypes.Structure):
    _fields_ = [
        ("scaleLen", ctypes.c_int),
        ("scaleData", ctypes.POINTER(ctypes.c_float)),
        ("zeroPointLen", ctypes.c_int),
        ("zeroPointData", ctypes.c_char_p)
    ]


class hbDNNTensorShape_t(ctypes.Structure):
    _fields_ = [
        ("dimensionSize", ctypes.c_int * 8),
        ("numDimensions", ctypes.c_int)
    ]


class hbDNNTensorProperties_t(ctypes.Structure):
    _fields_ = [
        ("validShape", hbDNNTensorShape_t),
        ("alignedShape", hbDNNTensorShape_t),
        ("tensorLayout", ctypes.c_int),
        ("tensorType", ctypes.c_int),
        ("shift", hbDNNQuantiShift_yt),
        ("scale", hbDNNQuantiScale_t),
        ("quantiType", ctypes.c_int),
        ("quantizeAxis", ctypes.c_int),
        ("alignedByteSize", ctypes.c_int),
        ("stride", ctypes.c_int * 8)
    ]


class hbDNNTensor_t(ctypes.Structure):
    _fields_ = [
        ("sysMem", hbSysMem_t * 4),
        ("properties", hbDNNTensorProperties_t)
    ]


class FcosPostProcessInfo_t(ctypes.Structure):
    _fields_ = [
        ("height", ctypes.c_int),
        ("width", ctypes.c_int),
        ("ori_height", ctypes.c_int),
        ("ori_width", ctypes.c_int),
        ("score_threshold", ctypes.c_float),
        ("nms_threshold", ctypes.c_float),
        ("nms_top_k", ctypes.c_int),
        ("is_pad_resize", ctypes.c_int)
    ]


libpostprocess = ctypes.CDLL('/usr/lib/libpostprocess.so')

get_Postprocess_result = libpostprocess.FcosPostProcess
get_Postprocess_result.argtypes = [ctypes.POINTER(FcosPostProcessInfo_t)]
get_Postprocess_result.restype = ctypes.c_char_p


def get_TensorLayout(Layout):
    if Layout == "NCHW":
        return int(2)
    else:
        return int(0)


def signal_handler(signal, frame):
    global is_stop
    print("Stopping!\n")
    is_stop = True
    # Clean up GPIO on exit
    GPIO.cleanup()
    sys.exit(0)


def get_display_res():
    disp_w_small = 1920
    disp_h_small = 1080
    disp = srcampy.Display()
    resolution_list = disp.get_display_res()
    if (sensor_width, sensor_height) in resolution_list:
        print(f"Resolution {sensor_width}x{sensor_height} exists in the list.")
        return int(sensor_width), int(sensor_height)
    else:
        print(f"Resolution {sensor_width}x{sensor_height} does not exist in the list.")
        for res in resolution_list:
            # A 0x0 entry marks the end of the valid resolutions
            if res[0] == 0 and res[1] == 0:
                break
            else:
                disp_w_small = res[0]
                disp_h_small = res[1]

            # Use the first resolution that fits inside the sensor size
            if res[0] <= sensor_width and res[1] <= sensor_height:
                print(f"Resolution {res[0]}x{res[1]}.")
                return int(res[0]), int(res[1])

    disp.close()
    return disp_w_small, disp_h_small


disp_w, disp_h = get_display_res()


def get_classes():
    return np.array([
        "person", "bicycle", "car", "motorcycle", "airplane", "bus", "train",
        "truck", "boat", "traffic light", "fire hydrant", "stop sign",
        "parking meter", "bench", "bird", "cat", "dog", "horse", "sheep", "cow",
        "elephant", "bear", "zebra", "giraffe", "backpack", "umbrella",
        "handbag", "tie", "suitcase", "frisbee", "skis", "snowboard",
        "sports ball", "kite", "baseball bat", "baseball glove", "skateboard",
        "surfboard", "tennis racket", "bottle", "wine glass", "cup", "fork",
        "knife", "spoon", "bowl", "banana", "apple", "sandwich", "orange",
        "broccoli", "carrot", "hot dog", "pizza", "donut", "cake", "chair",
        "couch", "potted plant", "bed", "dining table", "toilet", "tv",
        "laptop", "mouse", "remote", "keyboard", "cell phone", "microwave",
        "oven", "toaster", "sink", "refrigerator", "book", "clock", "vase",
        "scissors", "teddy bear", "hair drier", "toothbrush"
    ])


def get_hw(pro):
    if pro.layout == "NCHW":
        return pro.shape[2], pro.shape[3]
    else:
        return pro.shape[1], pro.shape[2]


def print_properties(pro):
    print("tensor type:", pro.tensor_type)
    print("data type:", pro.dtype)
    print("layout:", pro.layout)
    print("shape:", pro.shape)


class ParallelExector(object):
    def __init__(self, counter, parallel_num=1):
        global image_counter
        image_counter = counter
        self.parallel_num = parallel_num
        if parallel_num != 1:
            self._pool = multiprocessing.Pool(processes=self.parallel_num, maxtasksperchild=5)
            self.workers = BoundedSemaphore(self.parallel_num)

    def infer(self, output):
        if self.parallel_num == 1:
            run(output)
        else:
            self.workers.acquire()
            self._pool.apply_async(func=run, args=(output, ), callback=self.task_done, error_callback=print)

    def task_done(self, *args, **kwargs):
        self.workers.release()

    def close(self):
        if hasattr(self, "_pool"):
            self._pool.close()
            self._pool.join()


def limit_display_cord(coor):
    coor[0] = max(min(disp_w, coor[0]), 0)
    # The top edge stops at 2 rather than 0, since the label is drawn at y - 2
    coor[1] = max(min(disp_h, coor[1]), 2)
    coor[2] = max(min(disp_w, coor[2]), 0)
    coor[3] = max(min(disp_h, coor[3]), 0)
    return coor


def scale_bbox(bbox, input_w, input_h, output_w, output_h):
    scale_x = output_w / input_w
    scale_y = output_h / input_h
    x1 = int(bbox[0] * scale_x)
    y1 = int(bbox[1] * scale_y)
    x2 = int(bbox[2] * scale_x)
    y2 = int(bbox[3] * scale_y)
    return [x1, y1, x2, y2]


def run(outputs):
    global image_counter
    global fcos_postprocess_info, output_tensors, disp, colors, start_time

    # Point each ctypes tensor at the matching numpy output buffer
    strides = [8, 16, 32, 64, 128]
    for i in range(len(strides)):
        if (output_tensors[i].properties.quantiType == 0):
            output_tensors[i].sysMem[0].virAddr = ctypes.cast(outputs[i].ctypes.data_as(ctypes.POINTER(ctypes.c_float)), ctypes.c_void_p)
            output_tensors[i + 5].sysMem[0].virAddr = ctypes.cast(outputs[i + 5].ctypes.data_as(ctypes.POINTER(ctypes.c_float)), ctypes.c_void_p)
            output_tensors[i + 10].sysMem[0].virAddr = ctypes.cast(outputs[i + 10].ctypes.data_as(ctypes.POINTER(ctypes.c_float)), ctypes.c_void_p)
        else:
            output_tensors[i].sysMem[0].virAddr = ctypes.cast(outputs[i].ctypes.data_as(ctypes.POINTER(ctypes.c_int32)), ctypes.c_void_p)
            output_tensors[i + 5].sysMem[0].virAddr = ctypes.cast(outputs[i + 5].ctypes.data_as(ctypes.POINTER(ctypes.c_int32)), ctypes.c_void_p)
            output_tensors[i + 10].sysMem[0].virAddr = ctypes.cast(outputs[i + 10].ctypes.data_as(ctypes.POINTER(ctypes.c_int32)), ctypes.c_void_p)

        libpostprocess.FcosdoProcess(output_tensors[i], output_tensors[i + 5], output_tensors[i + 10], ctypes.pointer(fcos_postprocess_info), i)

    result_str = get_Postprocess_result(ctypes.pointer(fcos_postprocess_info))
    result_str = result_str.decode('utf-8')

    # Skip the first 14 characters; the rest is parsed as JSON
    data = json.loads(result_str[14:])

    # --- FILTERING LOGIC ---
    filtered_data = [item for item in data if item['name'] == TARGET_OBJECT]

    # === LED CONTROL LOGIC START ===
    if len(filtered_data) > 0:
        # Object detected -> LED ON
        try:
            GPIO.output(LED_PIN, GPIO.HIGH)
        except Exception as e:
            pass  # Suppress GPIO errors inside loop to prevent crash
    else:
        # Object NOT detected -> LED OFF
        try:
            GPIO.output(LED_PIN, GPIO.LOW)
        except Exception as e:
            pass
    # === LED CONTROL LOGIC END ===

    if len(filtered_data) == 0:
        # Clear screen
        disp.set_graph_rect(0, 0, 0, 0, 3, 1, 0)
    else:
        for index, result in enumerate(filtered_data):
            bbox = result['bbox']
            score = result['score']
            id = int(result['id'])
            name = result['name']

            bbox = scale_bbox(bbox, 512, 512, disp_w, disp_h)
            coor = limit_display_cord(bbox)
            coor = [round(i) for i in coor]
            score = float(score)

            bbox_string = '%s: %.2f' % (name, score)
            bbox_string = bbox_string.encode('gb2312')

            box_color = colors[id]
            color_base = 0xFF000000
            box_color_ARGB = color_base | (box_color[0]) << 16 | (box_color[1]) << 8 | (box_color[2])

            print("{} is in the picture with confidence:{:.4f}, bbox:{}".format(name, score, coor))

            if index == 0:
                disp.set_graph_rect(coor[0], coor[1], coor[2], coor[3], 3, 1, box_color_ARGB)
                disp.set_graph_word(coor[0], coor[1] - 2, bbox_string, 3, 1, box_color_ARGB)
            else:
                disp.set_graph_rect(coor[0], coor[1], coor[2], coor[3], 3, 0, box_color_ARGB)
                disp.set_graph_word(coor[0], coor[1] - 2, bbox_string, 3, 0, box_color_ARGB)

    with image_counter.get_lock():
        image_counter.value += 1
    if image_counter.value == 100:
        finish_time = time()
        print(f"Total time cost for 100 frames: {finish_time - start_time}, fps: {100/(finish_time - start_time)}")


if __name__ == '__main__':
    signal.signal(signal.SIGINT, signal_handler)

    # === GPIO SETUP ===
    GPIO.setwarnings(False)
    GPIO.setmode(GPIO.BOARD)
    GPIO.setup(LED_PIN, GPIO.OUT)
    GPIO.output(LED_PIN, GPIO.LOW)  # Start OFF
    print(f"GPIO Setup Complete. Controlling LED on Pin {LED_PIN} based on {TARGET_OBJECT} detection.")
    # ==================

    # CHECK IF MODEL EXISTS BEFORE CRASHING
    if not os.path.exists(MODEL_PATH):
        print(f"\n[ERROR] Model file not found at: {MODEL_PATH}")
        print("Please search for 'fcos_512x512_nv12.bin' on your board and update MODEL_PATH.\n")
        GPIO.cleanup()
        sys.exit(1)

    models = dnn.load(MODEL_PATH)

    print("--- model input properties ---")
    print_properties(models[0].inputs[0].properties)
    print("--- model output properties ---")
    for output in models[0].outputs:
        print_properties(output.properties)

    fcos_postprocess_info = FcosPostProcessInfo_t()
    fcos_postprocess_info.height = 512
    fcos_postprocess_info.width = 512
    fcos_postprocess_info.ori_height = disp_h
    fcos_postprocess_info.ori_width = disp_w
    fcos_postprocess_info.score_threshold = 0.5
    fcos_postprocess_info.nms_threshold = 0.6
    fcos_postprocess_info.nms_top_k = 5
    fcos_postprocess_info.is_pad_resize = 0

    output_tensors = (hbDNNTensor_t * len(models[0].outputs))()
    for i in range(len(models[0].outputs)):
        output_tensors[i].properties.tensorLayout = get_TensorLayout(models[0].outputs[i].properties.layout)
        if (len(models[0].outputs[i].properties.scale_data) == 0):
            output_tensors[i].properties.quantiType = 0
        else:
            output_tensors[i].properties.quantiType = 2
            scale_data_tmp = models[0].outputs[i].properties.scale_data.reshape(1, 1, 1, models[0].outputs[i].properties.shape[3])
            output_tensors[i].properties.scale.scaleData = scale_data_tmp.ctypes.data_as(ctypes.POINTER(ctypes.c_float))

        for j in range(len(models[0].outputs[i].properties.shape)):
            output_tensors[i].properties.validShape.dimensionSize[j] = models[0].outputs[i].properties.shape[j]
            output_tensors[i].properties.alignedShape.dimensionSize[j] = models[0].outputs[i].properties.shape[j]

    cam = srcampy.Camera()
    h, w = get_hw(models[0].inputs[0].properties)
    input_shape = (h, w)
    cam.open_cam(0, -1, -1, [w, disp_w], [h, disp_h], sensor_height, sensor_width)

    disp = srcampy.Display()
    disp.display(0, disp_w, disp_h)
    srcampy.bind(cam, disp)
    disp.display(3, disp_w, disp_h)

    # One distinct colour per class for the on-screen boxes
    classes = get_classes()
    num_classes = len(classes)
    hsv_tuples = [(1.0 * x / num_classes, 1., 1.) for x in range(num_classes)]
    colors = list(map(lambda x: colorsys.hsv_to_rgb(*x), hsv_tuples))
    colors = list(map(lambda x: (int(x[0] * 255), int(x[1] * 255), int(x[2] * 255)), colors))

    start_time = time()
    image_counter = multiprocessing.Value("i", 0)
    parallel_exe = ParallelExector(image_counter, parallel_num=1)

    try:
        while not is_stop:
            cam_start_time = time()
            img = cam.get_img(2, 512, 512)
            cam_finish_time = time()

            buffer_start_time = time()
            img = np.frombuffer(img, dtype=np.uint8)
            buffer_finish_time = time()

            infer_start_time = time()
            outputs = models[0].forward(img)
            infer_finish_time = time()

            output_array = []
            for item in outputs:
                output_array.append(item.buffer)
            parallel_exe.infer(output_array)
    except Exception as e:
        print(e)
    finally:
        cam.close_cam()
        disp.close()
        GPIO.cleanup()
```
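To make the box arithmetic in the script easier to follow, here is a small stand-alone version of its scale_bbox and limit_display_cord helpers that runs on any computer. It is a sketch under two assumptions: the boxes arrive in 512x512 model-input pixels (which is what the scale_bbox(bbox, 512, 512, disp_w, disp_h) call implies), and the display size is passed in as arguments instead of being read from the global disp_w and disp_h.

```python
def scale_bbox(bbox, input_w, input_h, output_w, output_h):
    """Stretch an [x1, y1, x2, y2] box from model-input pixels to display pixels."""
    scale_x = output_w / input_w
    scale_y = output_h / input_h
    return [int(bbox[0] * scale_x), int(bbox[1] * scale_y),
            int(bbox[2] * scale_x), int(bbox[3] * scale_y)]


def limit_display_cord(coor, disp_w, disp_h):
    """Clamp a box to the screen; the top edge stops at 2, as in the script."""
    return [max(min(disp_w, coor[0]), 0),
            max(min(disp_h, coor[1]), 2),
            max(min(disp_w, coor[2]), 0),
            max(min(disp_h, coor[3]), 0)]


# A box covering the middle of the 512x512 input, shown on a 1920x1080 display.
print(scale_bbox([128, 128, 384, 384], 512, 512, 1920, 1080))   # [480, 270, 1440, 810]

# A box that spills past every screen edge gets pulled back inside.
print(limit_display_cord([-20, -5, 2000, 1100], 1920, 1080))    # [0, 2, 1920, 1080]
```

The horizontal scale is 1920 / 512 = 3.75 and the vertical scale is 1080 / 512, roughly 2.11, so a square box in model space becomes a wide rectangle on screen.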

First, we will test it with a person…and after that, we will test it again using “car” to show how easily you can switch objects and change the behavior.

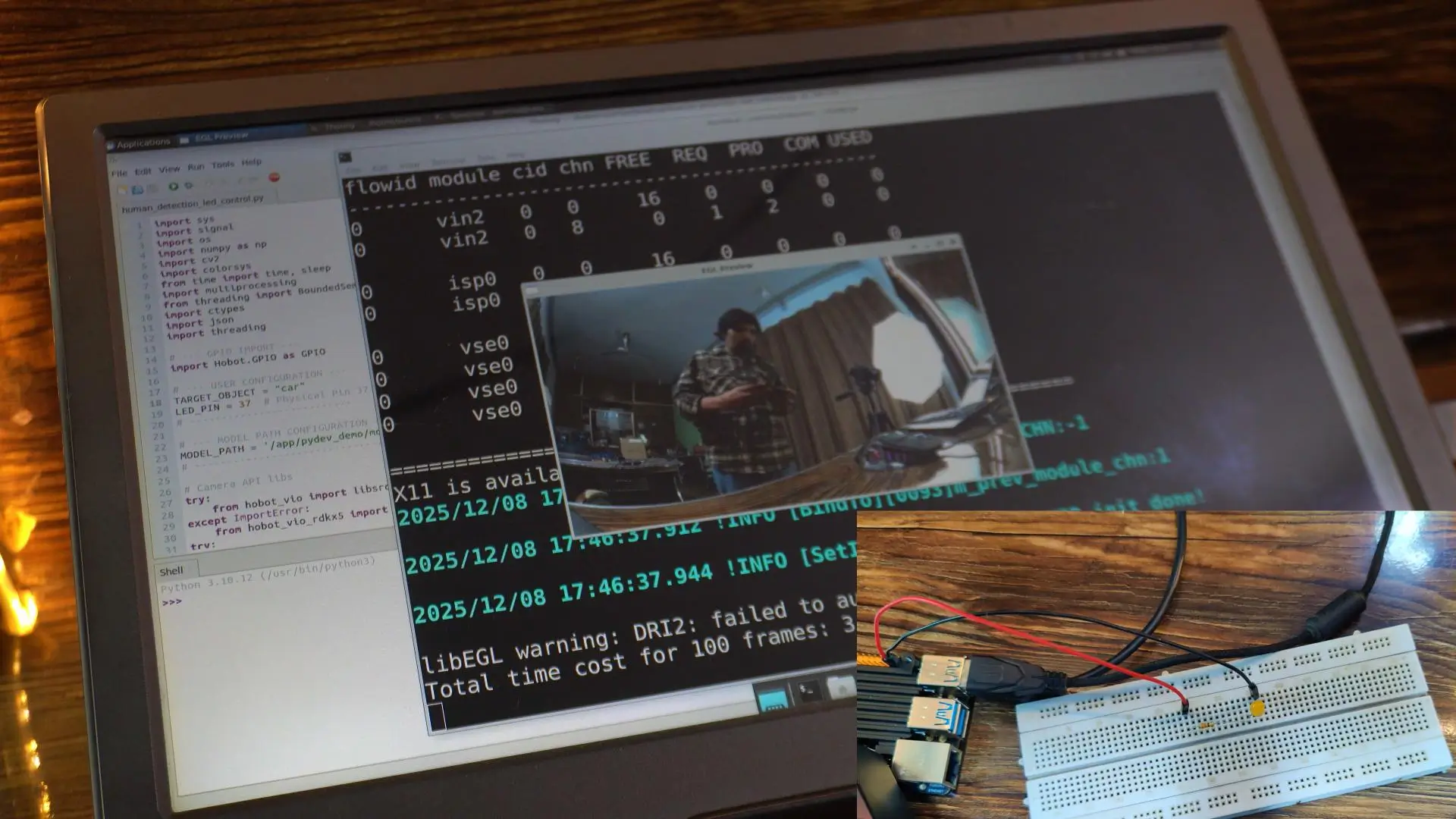

Alright, let’s go ahead and run this code.

As you can see, the LED is currently OFF, because there is no one in front of the camera.

Now watch this; the moment I step into the frame…

the LED instantly turns ON.

This is mind-blowing.

Real-time object detection, controlling physical hardware; all happening directly on the RDK X5.
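The script calls GPIO.output on every single frame, which works fine for an LED. If you later swap the LED for a relay or a buzzer, you may prefer to touch the pin only when the state actually changes. The sketch below shows one way to do that; LedSwitch is a hypothetical helper, and the write function is a stand-in so the example runs without the board.

```python
class LedSwitch:
    """Remembers the last state and calls write(pin, on) only when it changes."""

    def __init__(self, pin, write):
        self.pin = pin
        self.write = write    # on the board this would wrap GPIO.output
        self.is_on = None     # unknown until the first update

    def update(self, detected):
        detected = bool(detected)
        if detected != self.is_on:
            self.write(self.pin, detected)
            self.is_on = detected
        return self.is_on


# Stand-in for the real pin write: just record what would have been sent.
calls = []
led = LedSwitch(37, lambda pin, on: calls.append((pin, on)))

# Five frames: nobody, nobody, person, person, nobody.
for frame_has_person in [False, False, True, True, False]:
    led.update(frame_has_person)

print(calls)   # [(37, False), (37, True), (37, False)]
```

On the RDK X5 the write argument could be something like lambda pin, on: GPIO.output(pin, GPIO.HIGH if on else GPIO.LOW), and inside run() you would call led.update(len(filtered_data) > 0).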

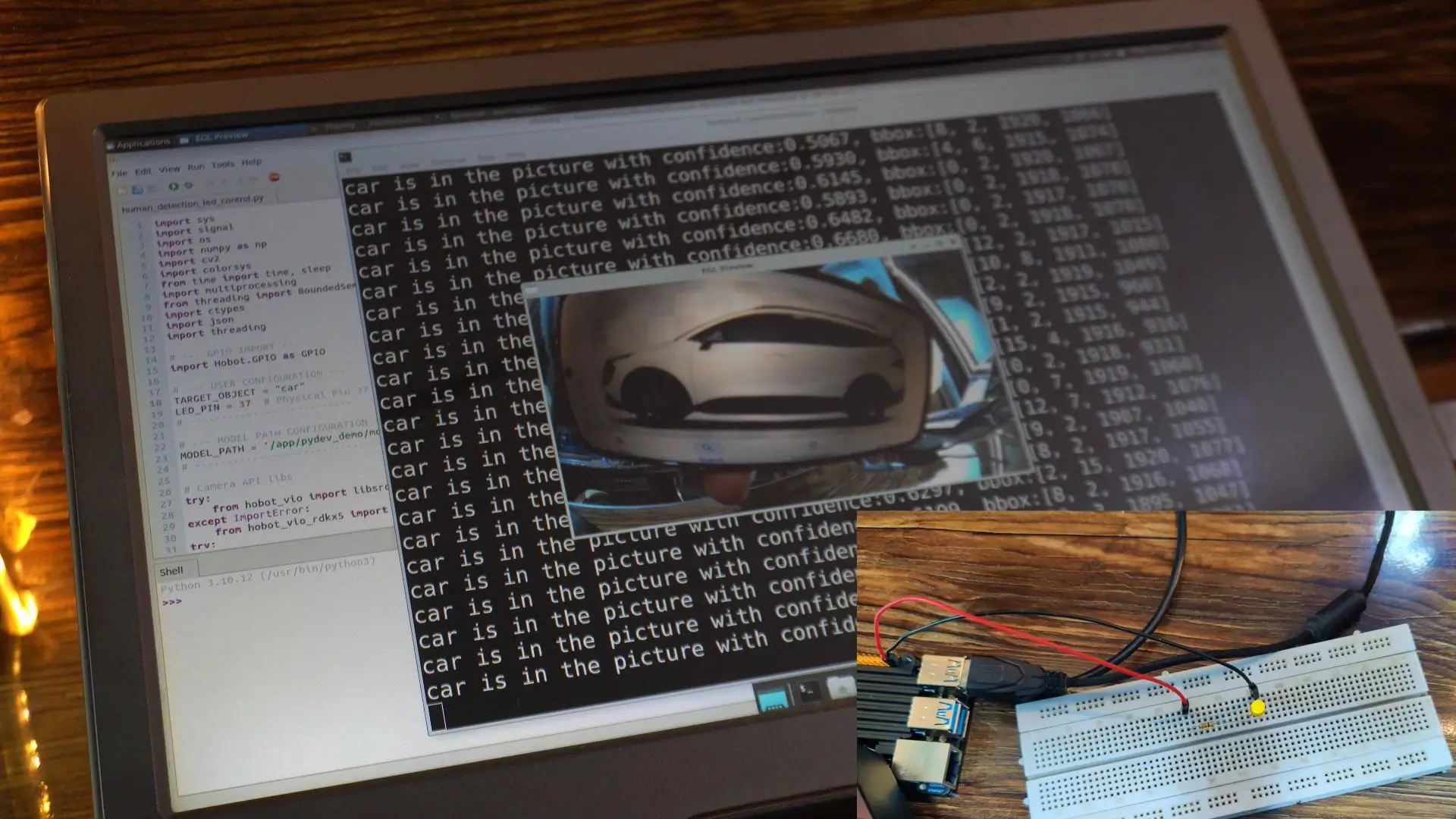

Now, let’s take it one step further and test it with “car.”

I have changed the target object from person to car, and look at this:

Even though I am right here in the frame, the LED does NOT turn on anymore.

It will only turn on when the camera detects a car.
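If you want more than one object to trigger the LED, the single-name filter can be widened to a set of names. This is a minimal sketch, not the code from the script: filter_detections and min_score are hypothetical, and the sample frame is made up. The dictionary keys (name, score, bbox, id) are the ones the script reads from each result.

```python
def filter_detections(detections, targets, min_score=0.5):
    """Keep detections whose name is in `targets` and whose score is high enough."""
    wanted = set(targets)
    return [d for d in detections
            if d["name"] in wanted and float(d["score"]) >= min_score]


# Made-up results for one frame.
frame = [
    {"name": "person", "score": 0.91, "bbox": [40, 60, 200, 400], "id": 0},
    {"name": "car", "score": 0.42, "bbox": [250, 300, 500, 450], "id": 2},
    {"name": "cup", "score": 0.77, "bbox": [10, 10, 50, 60], "id": 41},
]

hits = filter_detections(frame, {"person", "car"})
print([d["name"] for d in hits])   # ['person'] because the car is below min_score
```

The LED condition then stays the same as before: turn it ON when the filtered list is not empty.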

This level of precision is absolutely amazing.

And that’s not all; I have written several more examples that you can try out yourself.

All of this additional code is available on my Patreon.

You will also find multiple YOLO-related samples inside the RDK X5.

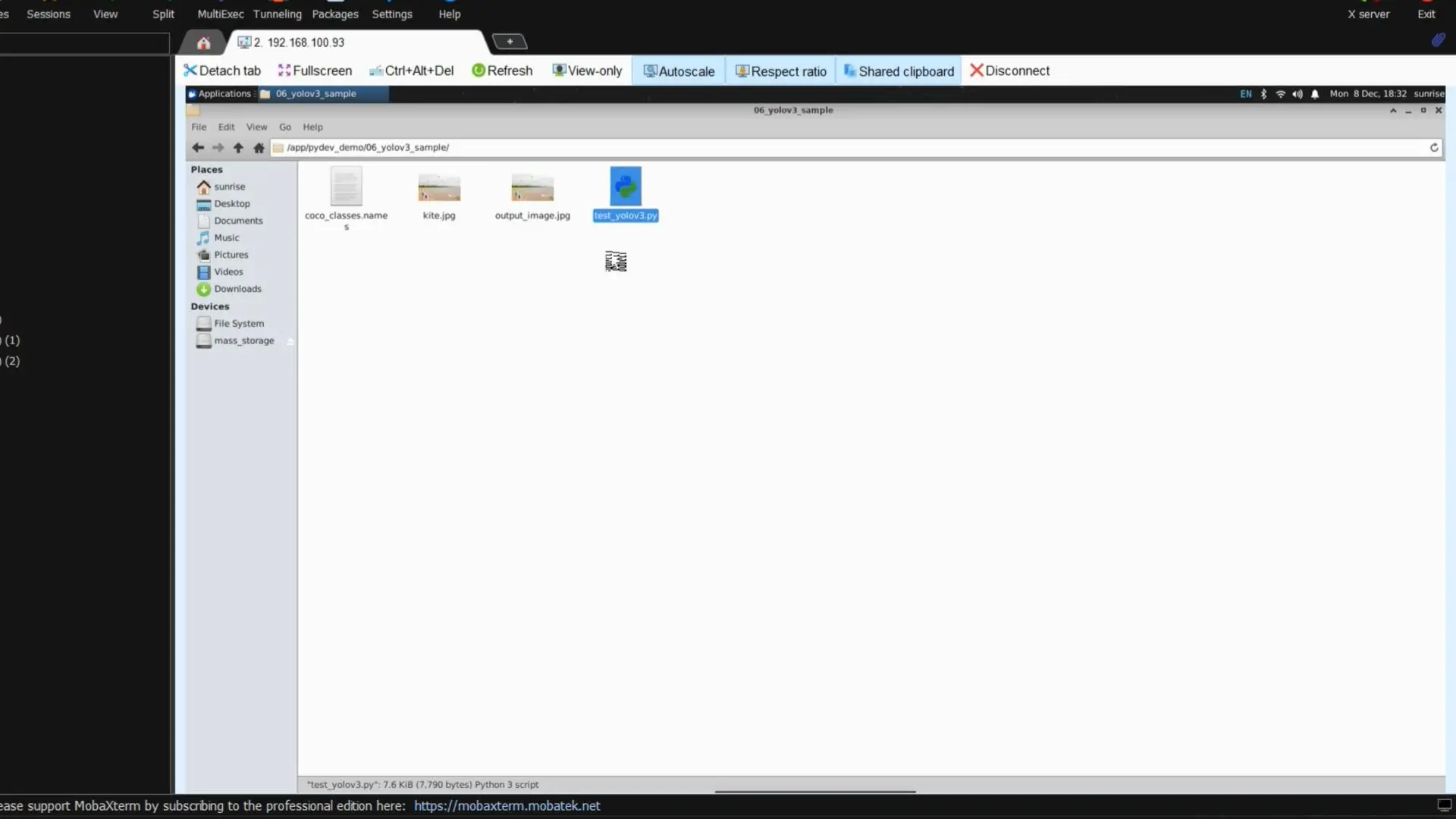

Open the 6th folder — Yolov3_sample.

This example detects objects inside a static image called kite.jpg.

When I ran the script, it produced this output image…

And just look at the accuracy.

YOLO v3 has identified all the objects with impressive confidence.

Now, if you have followed my earlier work, you will remember that I once used YOLO v3 with the ESP32-Camera module…

and on top of that, I built a complete virtual laser security system using Python, OpenCV, and MediaPipe pose landmarks.

No PIR sensors.

No ultrasonic sensors.

No physical wires or tripwires.

Just pure AI.

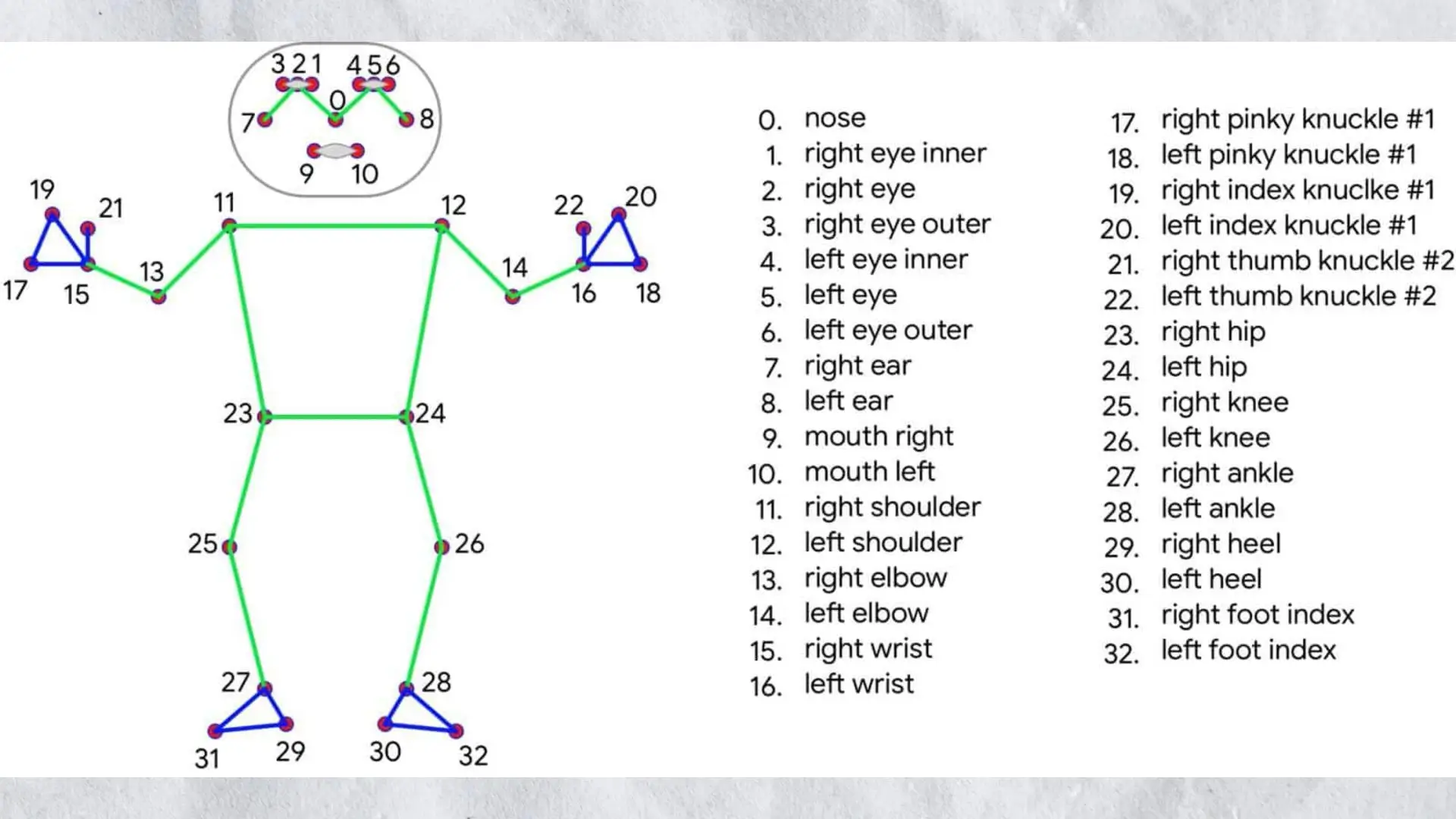

MediaPipe gives you 33 highly accurate body landmarks, and by tracking even a single one—like landmark 31, which we used last time—I was able to detect when someone crossed a line or entered a restricted zone with insane accuracy.
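The line-crossing idea comes down to checking which side of a line a single point is on. Here is a minimal sketch of that test using the sign of a 2D cross product. The function names and the example coordinates are my own. Keep in mind that MediaPipe returns landmark positions normalised to the 0 to 1 range, so you would multiply them by the frame width and height first to get pixels.

```python
def side_of_line(point, line_start, line_end):
    """Return +1 or -1 for the two sides of the line, 0 if the point is on it."""
    (px, py), (ax, ay), (bx, by) = point, line_start, line_end
    cross = (bx - ax) * (py - ay) - (by - ay) * (px - ax)
    return (cross > 0) - (cross < 0)


def crossed(prev_point, curr_point, line_start, line_end):
    """True when two consecutive positions are strictly on opposite sides."""
    before = side_of_line(prev_point, line_start, line_end)
    after = side_of_line(curr_point, line_start, line_end)
    return before * after < 0


# A vertical "virtual laser" down the middle of a 640x480 frame.
LINE = ((320, 0), (320, 480))

print(side_of_line((100, 300), *LINE))          # 1  (left of the line)
print(side_of_line((400, 300), *LINE))          # -1 (right of the line)
print(crossed((100, 300), (400, 300), *LINE))   # True
```

Which sign counts as the restricted side is your choice; it only depends on the order in which you give the two end points of the line.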

And now…

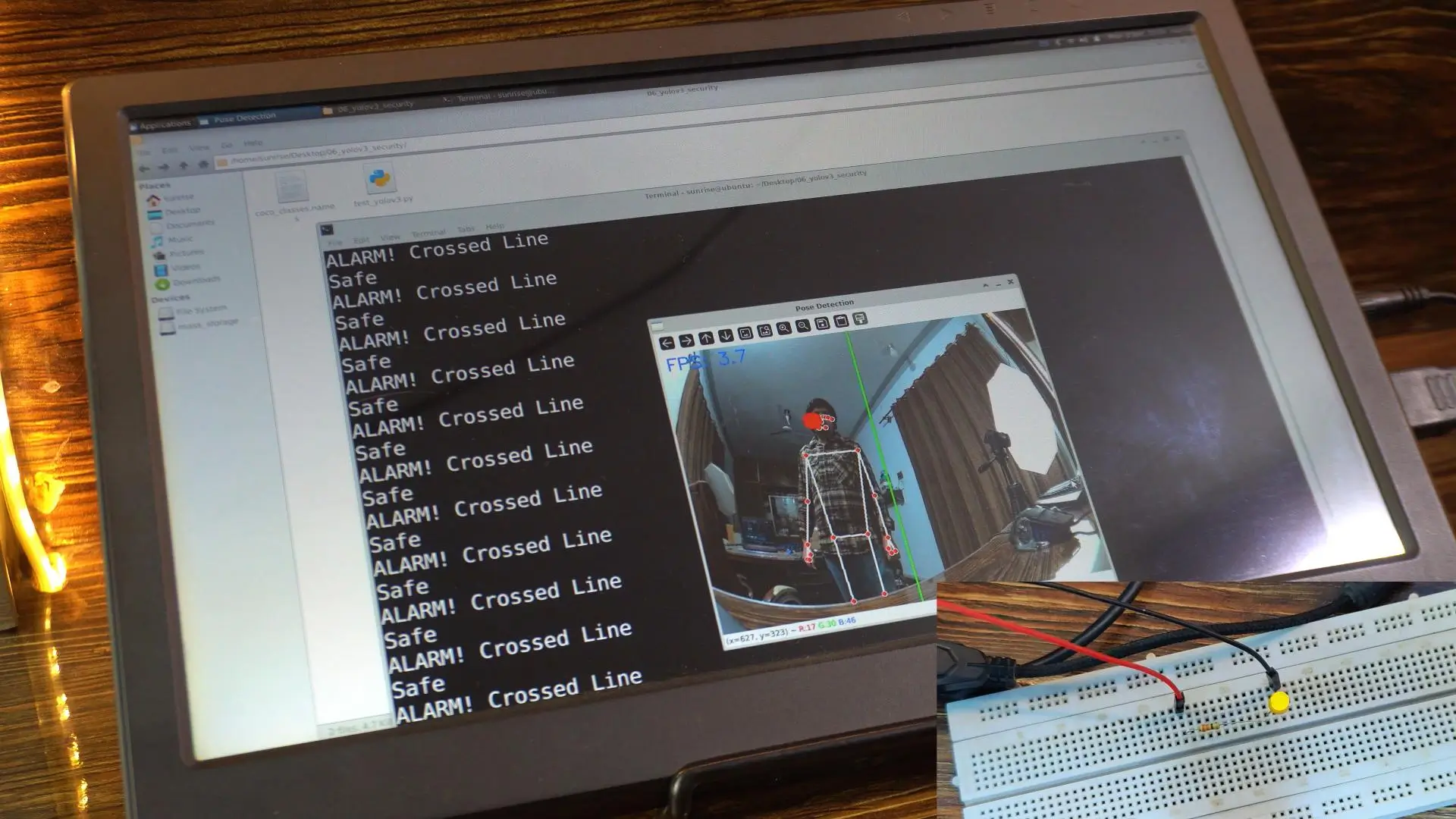

I have implemented that same concept on the RDK X5 as well.

Think of that green line on the screen as our virtual laser.

And the best part?

You are not limited to a straight line. You can draw complex regions, custom shapes, entire zones; and then track whether a person or object enters them.
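For zones that are not a simple line, a common approach is the ray-casting point-in-polygon test. The sketch below is plain Python and is not taken from my RDK X5 script; point_in_polygon and the L-shaped zone are only an illustration.

```python
def point_in_polygon(point, polygon):
    """Ray-casting test: True if `point` is inside `polygon` (list of (x, y) vertices)."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through the point?
        if (y1 > y) != (y2 > y):
            # x position where the edge meets that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


# An L-shaped zone, given as its corner points in order.
ZONE = [(0, 0), (200, 0), (200, 100), (100, 100), (100, 200), (0, 200)]

print(point_in_polygon((50, 150), ZONE))    # True, inside the lower leg of the L
print(point_in_polygon((150, 150), ZONE))   # False, inside the cut-out corner
print(point_in_polygon((150, 50), ZONE))    # True, inside the upper bar of the L
```

Each time a ray going right from the point crosses an edge, the inside flag flips, so an odd number of crossings means the point is inside the zone.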

Right now, one side of the line is the Normal Area, and the other side is the Prohibited Area.

As you can see, I am standing in the normal area, so the LED is OFF.

Zero false triggering.

Zero noise.

Pure logic.

But the moment I step into the prohibited area…

The LED turns ON.

And it will stay ON as long as I remain inside this restricted zone.

The moment I step back into the normal area…

The LED switches OFF again.
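The zone script itself is not listed in this article, so the following is only a sketch of how the ON and OFF behaviour described above could be decided from detection boxes. It assumes a vertical boundary with the prohibited area on the right, and it uses the bottom-centre of the box as the spot where the person is standing. All names here (foot_point, in_prohibited_area, led_should_be_on, boundary_x) are hypothetical.

```python
def foot_point(bbox):
    """Bottom-centre of an [x1, y1, x2, y2] box, a rough guess at where the person stands."""
    x1, y1, x2, y2 = bbox
    return ((x1 + x2) / 2, y2)


def in_prohibited_area(bbox, boundary_x):
    """Assumption: everything to the right of the vertical boundary is prohibited."""
    return foot_point(bbox)[0] > boundary_x


def led_should_be_on(detections, boundary_x, target="person"):
    """True if at least one target object stands in the prohibited area."""
    return any(d["name"] == target and in_prohibited_area(d["bbox"], boundary_x)
               for d in detections)


BOUNDARY_X = 960   # middle of a 1920-pixel-wide display

normal = [{"name": "person", "bbox": [200, 300, 400, 900]}]
prohibited = [{"name": "person", "bbox": [1000, 300, 1300, 900]}]
only_car = [{"name": "car", "bbox": [1000, 300, 1300, 900]}]

print(led_should_be_on(normal, BOUNDARY_X))       # False
print(led_should_be_on(prohibited, BOUNDARY_X))   # True
print(led_should_be_on(only_car, BOUNDARY_X))     # False
```

Because the decision is recomputed for every frame, the LED stays ON for as long as the person remains in the prohibited area and goes OFF as soon as they step back.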

This is exactly how virtual security systems should behave; precise, reliable, and fully customizable.

Applications of RDK X5 Object Detection

- AI-powered security and surveillance systems

- Smart home automation using camera-based detection

- Industrial safety monitoring

- Road safety monitoring

- Access control and intrusion detection

- Robotics and autonomous systems

Conclusion: RDK X5 Object Detection Made Easy

In this guide, we successfully implemented RDK X5 object detection using a camera and pretrained deep learning models, including YOLO.

By combining real-time AI vision with GPIO control, we created a powerful automation and security system that runs entirely on the RDK X5.

This approach opens the door to advanced AI-powered projects such as smart surveillance, access control, and robotics applications.

Frequently Asked Questions About RDK X5 Object Detection

Can RDK X5 run real-time object detection?

Yes, the RDK X5 is capable of running real-time object detection using optimized YOLO models, making it suitable for AI vision and automation applications.

Is YOLO supported on RDK X5?

YOLO models can be deployed on RDK X5 using compatible AI frameworks, enabling fast and accurate object detection from camera input.

Which camera works best for RDK X5 object detection?

USB cameras and stereo cameras officially supported by RDK X5 work well for object detection, especially when using real-time video streams.

Can RDK X5 control hardware using object detection?

Yes, detected objects can trigger GPIO outputs on RDK X5, allowing you to control LEDs, relays, buzzers, or other hardware devices automatically.

If you want the complete code, I have uploaded all the scripts, resources, and project files on Patreon. Your support truly means a lot; thank you!

So, that’s all for now.
